Sound is not simply noise floating in the air. It is vibration, energy, and movement—a mechanical force that travels through matter and ultimately connects us to the world around us. Understanding how sound works is essential to grasping why hearing loss occurs, how tinnitus develops, and why hearing aids need to be precisely fitted to your individual hearing profile.

For audiologists, explaining sound waves is not merely academic. It helps patients understand why they may hear someone speaking but struggle to follow the conversation, particularly in noisy environments. It clarifies why high-pitched consonants like ‘s’, ‘f’, and ‘th’ often disappear first in age-related hearing loss, and why simply turning up the volume rarely solves the problem.

In everyday life, our ability to perceive sound impacts everything from understanding speech and enjoying music to detecting alarms, traffic, and environmental hazards. When this system breaks down—even partially—the consequences extend far beyond the ear itself, affecting cognitive load, social participation, and emotional well-being.

What Is a Sound Wave?

Sound is a form of mechanical energy. When an object vibrates—whether it’s a guitar string, a car engine, or your vocal cords—it creates pressure waves that ripple outward through the surrounding medium. This medium is usually air, but sound also travels through solids and liquids, often more efficiently than through air.

These pressure waves are sound waves. They move by compressing and rarefying air molecules in a chain reaction, transferring energy from particle to particle without the particles themselves travelling far. The sound you hear from a loudspeaker across the room is not air molecules rushing toward you, but rather energy propagating through the air already between you and the source.

Every sound wave has two fundamental characteristics: frequency and amplitude.

Frequency, measured in Hertz (Hz), determines the pitch of a sound. It refers to how many times per second the air pressure oscillates. A low-frequency sound, such as a bass drum or a truck rumbling past, vibrates slowly—perhaps 80 to 250 Hz. A high-frequency sound, like a bird chirping or a whistle, vibrates much faster—often between 2,000 and 8,000 Hz or more.

Amplitude determines the loudness of a sound and is usually expressed as a sound level in decibels (dB). It reflects the size of the pressure change in the sound wave. The decibel scale is logarithmic, so each 20 dB step corresponds to a tenfold increase in sound pressure. A whisper might register around 20–30 dB, while heavy traffic or a lawnmower can reach 80–90 dB. Prolonged exposure to sounds above 85 dB can damage the delicate structures inside your ear.
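
To make the decibel figures more concrete, here is a minimal Python sketch that converts between sound pressure and decibels. It assumes the levels quoted here are dB SPL (sound pressure level) referenced to 20 micropascals, the usual convention for sound in air; the article itself does not specify the reference, and the function names are purely illustrative.

```python
import math

# Assumption: levels are dB SPL, referenced to 20 micropascals in air.
REFERENCE_PRESSURE_PA = 20e-6


def pressure_to_db_spl(pressure_pa):
    """Convert an RMS sound pressure in pascals to a level in dB SPL."""
    if pressure_pa <= 0:
        raise ValueError("pressure must be positive")
    return 20 * math.log10(pressure_pa / REFERENCE_PRESSURE_PA)


def db_spl_to_pressure(level_db):
    """Convert a level in dB SPL back to an RMS pressure in pascals."""
    return REFERENCE_PRESSURE_PA * 10 ** (level_db / 20)


# Compare a 30 dB whisper with a 90 dB lawnmower.
whisper_pa = db_spl_to_pressure(30)
lawnmower_pa = db_spl_to_pressure(90)
pressure_ratio = lawnmower_pa / whisper_pa

print(f"A 60 dB difference is a pressure ratio of about {pressure_ratio:.0f}")
```

A 60 dB gap therefore represents roughly a thousandfold difference in sound pressure, which is why a level that looks only "three times higher" on paper is so much more intense for the ear.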

Together, frequency and amplitude give every sound its unique character. A violin playing middle C and a flute playing the same note have the same fundamental frequency, but differ in timbre due to the presence of overtones—additional frequencies that colour the sound.

The Sound Spectrum and the Human Hearing Range

Humans can typically hear sounds ranging from approximately 20 Hz to 20,000 Hz, though this upper limit declines with age and exposure to noise. This range is called the audible sound spectrum, and it represents the window through which we experience the acoustic world.

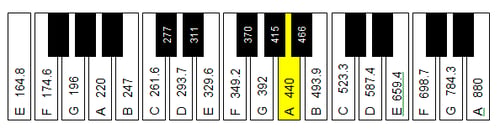

Not all frequencies are equally crucial for communication. **Speech sounds occupy a relatively narrow band**, roughly between 250 Hz and 6,000 Hz, with the most critical information for understanding speech concentrated between 1,000 Hz and 4,000 Hz. Within this range, vowels tend to be lower in frequency and louder, while consonants—particularly unvoiced ones like ‘s’, ‘f’, ‘th’, and ‘sh’—are higher in frequency and quieter.

This distribution has profound implications for hearing loss. High-frequency hearing loss is the most common pattern in both age-related (presbycusis) and noise-induced hearing loss. When the high frequencies are affected, vowels remain audible but consonants become muffled or disappear entirely. This is why people often report, “I can hear but I can’t understand.” The volume may seem adequate, but the clarity—the fine detail that distinguishes “cat” from “cap” or “sat”—is missing.

Audiologists assess hearing across a range of frequencies during a hearing test, typically focusing on the octave and inter-octave frequencies between 250 Hz and 8,000 Hz. This is not arbitrary; it reflects the speech spectrum and allows clinicians to map exactly where your hearing is intact and where it is compromised. A hearing loss affecting 4,000 Hz and above will have very different functional consequences than one affecting 500 Hz, even if the degree of loss is similar.
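
As a small illustration of what "octave and inter-octave frequencies" means, the Python sketch below lists the test frequencies between 250 Hz and 8,000 Hz. Each octave is a doubling of frequency. The inter-octave values (750, 1,500, 3,000, and 6,000 Hz) follow common clinical convention and are an assumption here, as are the function names.

```python
def octave_frequencies(start_hz=250, stop_hz=8000):
    """Return frequencies from start_hz to stop_hz, doubling each step."""
    freqs = []
    f = start_hz
    while f <= stop_hz:
        freqs.append(f)
        f *= 2
    return freqs


def inter_octave_frequencies(octaves):
    """Return the conventional in-between test points.

    Assumption: each inter-octave point is 1.5 times an octave frequency,
    starting from the second octave (750 Hz) and stopping below the top.
    """
    return [f * 3 // 2 for f in octaves[1:-1]]


octaves = octave_frequencies()
inter_octaves = inter_octave_frequencies(octaves)
test_frequencies = sorted(octaves + inter_octaves)

print(test_frequencies)
```

Plotting a hearing threshold at each of these points is what produces the familiar audiogram shape.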

Age-related changes in hearing tend to follow a predictable pattern, with the highest frequencies declining first and lower frequencies following over time. Noise exposure accelerates this process, particularly affecting the 3,000–6,000 Hz region, which is crucial for consonant recognition.

How the Ear Turns Sound into Meaning

The human ear is an extraordinary piece of biological engineering. It transforms mechanical vibrations into electrical signals that the brain interprets as sound, speech, and music. This process unfolds in three anatomical stages: the outer ear, the middle ear, and the inner ear.

The outer ear consists of the pinna (the visible part) and the ear canal. The pinna helps localise sound, and the ear canal acts as a resonator, naturally amplifying specific frequencies—particularly around 2,000–4,000 Hz, which are essential for speech.

Sound waves travel down the ear canal and strike the eardrum (tympanic membrane), causing it to vibrate. These vibrations are then transferred to the middle ear, a small air-filled space containing three tiny bones known as the ossicles: the malleus (hammer), incus (anvil), and stapes (stirrup). Together with the eardrum, the ossicles act as a mechanical amplifier, increasing the pressure of the vibrations by about 20–30 times and efficiently transferring energy from the air in the ear canal to the fluid-filled inner ear.
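
The 20–30 times figure can be roughly reproduced with a back-of-the-envelope calculation. The Python sketch below treats it as a pressure ratio and uses approximate textbook values for the eardrum's effective area, the stapes footplate area, and the ossicular lever ratio. These numbers are assumptions for illustration and do not come from this article; real ears vary.

```python
import math

# Approximate textbook values, assumed here for illustration only.
EARDRUM_EFFECTIVE_AREA_MM2 = 55.0
STAPES_FOOTPLATE_AREA_MM2 = 3.2
OSSICULAR_LEVER_RATIO = 1.3


def middle_ear_pressure_gain():
    """Estimate pressure gain from the area ratio and the lever action."""
    area_ratio = EARDRUM_EFFECTIVE_AREA_MM2 / STAPES_FOOTPLATE_AREA_MM2
    return area_ratio * OSSICULAR_LEVER_RATIO


def ratio_to_db(pressure_ratio):
    """Express a pressure ratio in decibels."""
    return 20 * math.log10(pressure_ratio)


gain = middle_ear_pressure_gain()
gain_db = ratio_to_db(gain)

print(f"Estimated pressure gain: about {gain:.0f} times ({gain_db:.0f} dB)")
```

With these assumed values the estimate comes out at about 22 times, or roughly 27 dB. Most of the gain comes from concentrating the force collected by the large eardrum onto the much smaller stapes footplate.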

The stapes connects to the cochlea, a snail-shaped structure in the inner ear filled with fluid and lined with thousands of specialised sensory cells called hair cells. As the stapes moves in and out of the oval window at the base of the cochlea, it creates pressure waves in the cochlear fluid. These waves cause the basilar membrane—a flexible structure running the length of the cochlea—to move up and down in a wave-like pattern.

Here is where frequency mapping occurs. The cochlea is **tonotopically organised**, meaning different parts respond to different frequencies. High-frequency sounds cause maximum movement near the base of the cochlea, while low-frequency sounds cause movement near the apex. This spatial organisation allows the ear to analyse the frequency content of sounds with remarkable precision.
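
One widely cited approximation of this place-to-frequency map is the Greenwood function. The Python sketch below uses the constants commonly quoted for the human cochlea (A = 165.4, a = 2.1, k = 0.88). Neither the function nor the constants come from this article, so treat them as an assumption; the sketch is only meant to show how position along the cochlea relates to frequency.

```python
import math

# Commonly quoted Greenwood constants for the human cochlea (assumed).
A = 165.4
ALPHA = 2.1
K = 0.88


def greenwood_frequency_hz(position):
    """Best frequency at a position along the cochlea.

    position is a fraction of cochlear length: 0.0 at the apex, 1.0 at the base.
    """
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must be between 0 and 1")
    return A * (10 ** (ALPHA * position) - K)


def greenwood_position(frequency_hz):
    """Inverse mapping: fractional distance from the apex for a frequency."""
    return math.log10(frequency_hz / A + K) / ALPHA


for freq in (250, 1000, 4000, 8000):
    place = greenwood_position(freq)
    print(f"{freq} Hz sits about {place:.0%} of the way from apex to base")
```

Under these assumptions the apex corresponds to roughly 20 Hz and the base to roughly 20,000 Hz, which lines up with the audible range described earlier. Higher frequencies map closer to the base.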

Sitting atop the basilar membrane are the hair cells—outer and inner hair cells—each topped with tiny hair-like projections called stereocilia. When the basilar membrane moves, the stereocilia bend, opening ion channels and generating electrical signals. The inner hair cells transmit these signals to the auditory nerve, which carries them to the brain for interpretation.

Once these signals reach the auditory cortex and related brain structures, the brain decodes not just the presence of sound, but its meaning: a word, a melody, a warning. This is why clarity is not the same as loudness. Loudness relates to how much the basilar membrane moves. Clarity relates to the fine detail in the signal—the precise timing, frequency resolution, and contrast—that allows the brain to distinguish between similar-sounding words.

Critically, hair cells do not regenerate in humans. Once damaged or lost, they do not grow back. This is why noise-induced and age-related hearing loss is permanent, and why prevention and early intervention are so important.

When Sound Processing Goes Wrong

Hearing loss can occur when any part of this system is disrupted. Broadly, hearing loss is categorised as **conductive** or **sensorineural**.

Conductive hearing loss occurs when sound is blocked from reaching the inner ear. This might be due to earwax, fluid in the middle ear, a perforated eardrum, or problems with the ossicles. Conductive loss typically affects all frequencies and is often medically or surgically treatable.

Sensorineural hearing loss involves damage to the cochlea or auditory nerve, most commonly the hair cells. This type of loss is usually permanent and often affects specific frequencies more than others, particularly the high frequencies. It is by far the most common type of hearing loss in adults.

What complicates sensorineural hearing loss is not just reduced volume but reduced clarity. Damaged or missing hair cells mean fewer signals are sent to the brain, and the signals that do get through may be distorted or lack fine detail. This is why people with sensorineural hearing loss often struggle in background noise, even when the overall loudness is comfortable.

The brain also plays an active role in sound processing. Auditory processing refers to how the brain interprets, filters, and makes sense of sound signals. When the ear sends degraded information to the brain because of hair cell loss, the brain has to work harder to fill in the gaps. Over time, this increased cognitive load can contribute to listening fatigue and social withdrawal, and it has been linked to accelerated cognitive decline.

Tinnitus—the perception of ringing, buzzing, or hissing without an external sound source—is another example of altered sound signalling. It is thought to arise when the brain attempts to compensate for reduced input from the cochlea, sometimes generating phantom sounds in the process. Tinnitus is frequently associated with hearing loss and can be influenced by sound therapy, hearing aids, and counselling.

How Hearing Aids Work with Sound Waves

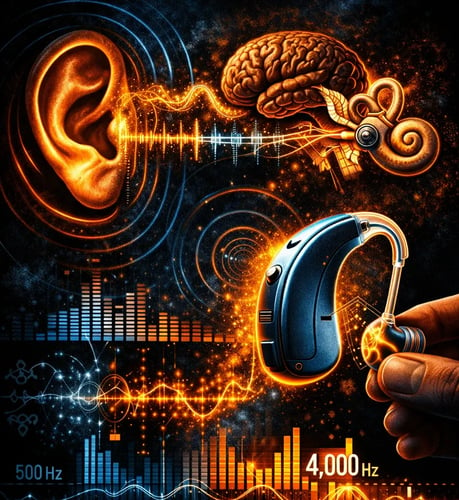

Hearing aids are sophisticated medical devices designed to work with the physics of sound and the biology of your ear. They do not simply amplify all sounds uniformly. Instead, they apply frequency-specific amplification tailored to your individual hearing loss, which is precisely why over-the-counter amplifiers or “hearing enhancers” are rarely adequate for actual hearing loss.

Modern hearing aids are digital signal processors. They continuously analyse incoming sound, breaking it down into multiple frequency bands, sometimes 16 or more. Each band can be independently adjusted to match your hearing thresholds at that frequency. If you have normal hearing at 500 Hz but a 40 dB loss at 4,000 Hz, the hearing aid applies little or no gain at 500 Hz but significant amplification at 4,000 Hz.
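
The idea of frequency-specific gain can be sketched in a few lines of Python. The example below uses the classic "half-gain rule" (gain of about half the hearing loss) purely as a simplified stand-in. Real fittings rely on validated prescription formulas such as NAL-NL2 or DSL, not on this rule. The 20 dB cut-off for "normal" hearing, the sample audiogram, and the function name are all assumptions for illustration.

```python
# Assumption: thresholds at or below 20 dB HL are treated as normal hearing.
NORMAL_LIMIT_DB_HL = 20


def half_gain_rule(thresholds_db_hl):
    """Return an illustrative gain per frequency band.

    thresholds_db_hl maps frequency in Hz to hearing threshold in dB HL.
    This is a teaching simplification, not a clinical prescription.
    """
    gains = {}
    for freq_hz, threshold in thresholds_db_hl.items():
        if threshold <= NORMAL_LIMIT_DB_HL:
            gains[freq_hz] = 0.0  # no amplification where hearing is normal
        else:
            gains[freq_hz] = threshold / 2  # roughly half the loss
    return gains


# Hypothetical audiogram: normal in the lows, sloping loss in the highs.
audiogram = {500: 10, 1000: 15, 2000: 30, 4000: 40}
gains = half_gain_rule(audiogram)

for freq_hz, gain_db in gains.items():
    print(f"{freq_hz} Hz: {gain_db:.0f} dB gain")
```

For this hypothetical audiogram the sketch applies no gain at 500 Hz and 20 dB at 4,000 Hz, mirroring the pattern described above. A uniform amplifier would instead boost the already-audible low frequencies just as much as the missing highs.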

Speech-in-noise processing is one of the most important functions. Hearing aids use algorithms to identify and prioritise speech while suppressing background noise. This involves spectral analysis, temporal processing, and sometimes machine learning to distinguish speech from competing sounds.

Directional microphones enhance this further by focusing on sound coming from in front of you—where conversation partners are usually located—while reducing sounds from the sides and rear. This improves the signal-to-noise ratio, making speech easier to understand in challenging environments.

Compression is another key feature. Hearing aids apply more gain to soft sounds and less to loud sounds, ensuring comfort while maintaining audibility across a wide dynamic range. Compression ratios, attack and release times, and knee points can all be adjusted during programming to suit your listening needs and preferences.
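
A simple way to picture compression is as an input/output rule. The Python sketch below models a static compressor with a single knee point: below the knee, sound receives full gain; above it, output grows more slowly according to the compression ratio. The specific values (30 dB gain, 50 dB knee, 2:1 ratio) are hypothetical, and attack and release times are not modelled.

```python
def compressed_output_db(input_db, gain_db=30.0, knee_db=50.0, ratio=2.0):
    """Return the output level in dB for a given input level.

    Below the knee point the gain is linear. Above it, every `ratio` dB of
    extra input produces only 1 dB of extra output.
    All default values are hypothetical, for illustration only.
    """
    if ratio < 1:
        raise ValueError("compression ratio must be at least 1")
    if input_db <= knee_db:
        return input_db + gain_db
    return knee_db + gain_db + (input_db - knee_db) / ratio


for level in (40, 65, 90):
    out = compressed_output_db(level)
    print(f"input {level} dB -> output {out:.1f} dB (gain {out - level:.1f} dB)")
```

With these settings a soft 40 dB sound receives the full 30 dB of gain, while a loud 90 dB sound receives only 10 dB. That is how a hearing aid can make quiet speech audible without making loud sounds uncomfortable.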

However, even the most advanced hearing aid cannot restore hearing to normal. It can improve audibility and clarity, but it cannot regenerate lost hair cells or perfectly replicate the ear’s natural frequency resolution. This is why **real-ear measurement (REM)** is so critical. REM involves placing a thin probe microphone in your ear canal to measure the hearing aid’s actual sound output at your eardrum. This verification step ensures the hearing aid is delivering the prescribed amplification based on your unique ear acoustics, which vary from person to person.

Hearing aids also allow for fine-tuning over time. As you adapt and provide feedback about different listening situations—restaurants, phone calls, meetings—your audiologist can adjust settings to optimise performance. This personalised, iterative approach is central to successful hearing aid use.

Why Understanding Sound Matters for Your Hearing Health

Grasping how sound waves work and how your ear processes them empowers you to make informed decisions about your hearing health. It explains why early testing is essential: even mild high-frequency loss can affect communication and quality of life long before it becomes evident to you or those around you.

For children, hearing well is essential for language development. Undiagnosed hearing loss in early childhood can delay speech, literacy, and social skills. Early identification and intervention, whether through hearing aids, cochlear implants, or other supports, can drastically improve outcomes.

For adults, hearing loss is increasingly recognised as a modifiable risk factor for cognitive decline and dementia. Research suggests that untreated hearing loss increases cognitive load, reduces social engagement, and may contribute to brain atrophy in auditory regions. Treating hearing loss with appropriately fitted hearing aids may reduce this risk and improve overall well-being.

Finally, understanding sound underscores the importance of personalised audiology care. Every ear is different, and every hearing loss has its own profile. A cookie-cutter approach—whether through online hearing tests, mail-order devices, or inadequate follow-up—cannot account for the complexity of your auditory system or your listening needs.

At The Audiology Place, we take a comprehensive, evidence-based approach to hearing care. From diagnostic audiometry and real-ear verification to ongoing support and counselling, we ensure your hearing aids are precisely matched to your hearing and your life. Because when it comes to sound, the details matter—and so do you.